Last Updated on July 6, 2023 by Mayank Dham

Artificial Neural Networks (ANNs) are a type of machine learning model inspired by the structure and operation of the human brain. By allowing computers to learn from complex data patterns and make predictions, they have transformed many fields. In this article, we look at how Artificial Neural Networks work, where they are applied, and what their advantages and disadvantages are.

What is an Artificial Neural Network?

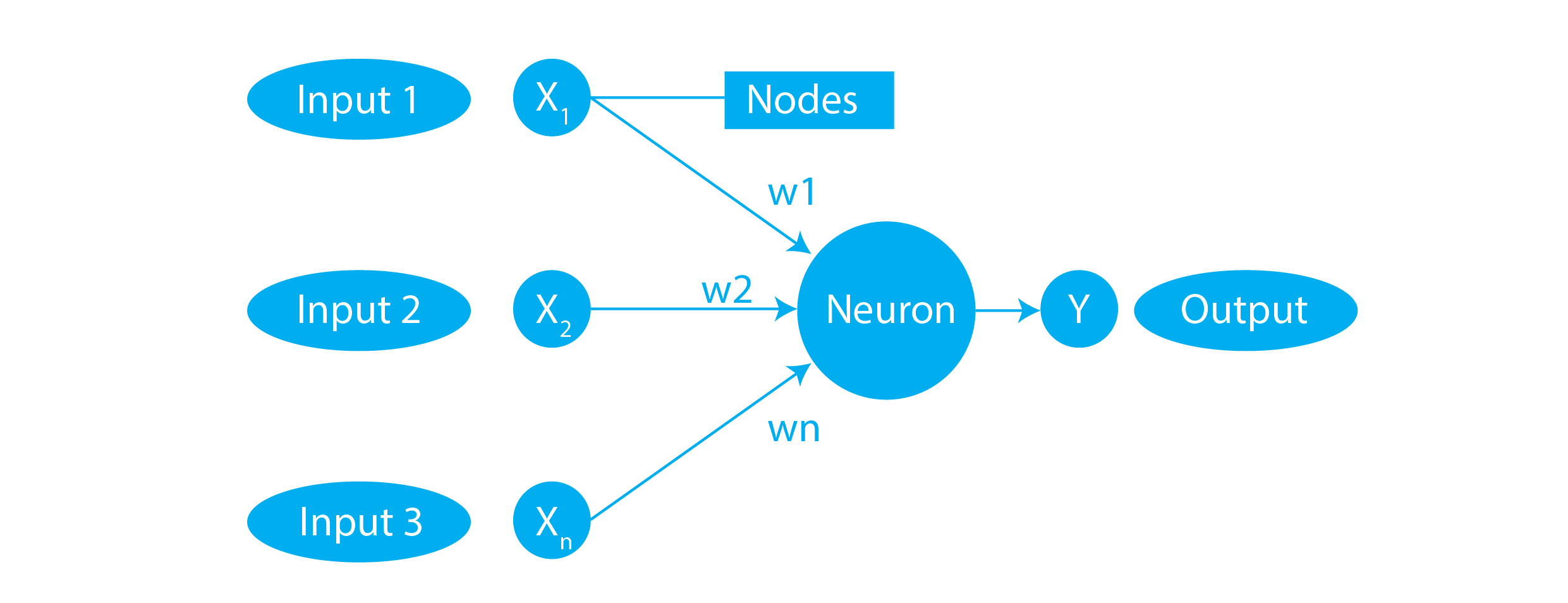

Artificial Neural Networks are composed of interconnected nodes, called artificial neurons or "neurons," organized in layers. The network receives inputs, processes them through a series of interconnected neurons, and produces outputs. Each neuron applies mathematical operations to its inputs, often involving activation functions, to produce an output that is passed to the next layer.

A typical Artificial Neural Network looks like the figure above.

How Do Artificial Neural Networks Work?

As described above, an ANN takes input data, processes it through layers of interconnected neurons, and produces an output. The main building blocks and steps are as follows.

1. Neurons and Connections:

Each neuron in an ANN receives input values from the previous layer or directly from the input data. These inputs are multiplied by connection weights, which represent the strength or importance of each connection. The weighted inputs are then summed, usually together with a bias term, and an activation function is applied to the sum to produce the neuron’s output.
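
The computation of a single neuron can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names, the input values, the weights, and the bias are all made up for the example, and the sigmoid function is just one possible choice of activation.

```python
import math

def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(weighted_sum)

# Illustrative values only: three inputs, one weight per input, one bias.
inputs = [0.5, -1.0, 2.0]
weights = [0.4, 0.3, -0.2]
bias = 0.5

# Weighted sum: 0.2 - 0.3 - 0.4 + 0.5 = 0.0, so the output is close to 0.5.
output = neuron_output(inputs, weights, bias)
print(output)
```

Changing a weight changes how strongly the corresponding input influences the output, which is what training later exploits.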

2. Activation Function:

The activation function introduces nonlinearity to the neuron’s output. Common activation functions include the sigmoid function, ReLU (Rectified Linear Unit), and tanh (Hyperbolic Tangent).
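
As a rough sketch, the three activation functions named above can be written with Python's standard `math` module. The function names and the sample inputs are chosen for illustration only.

```python
import math

def sigmoid(z: float) -> float:
    """Maps any real number to the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z: float) -> float:
    """Passes positive values through unchanged and clips negatives to 0."""
    return max(0.0, z)

def tanh(z: float) -> float:
    """Maps any real number to the range (-1, 1)."""
    return math.tanh(z)

# Compare the three functions on a few sample inputs.
for z in (-2.0, 0.0, 2.0):
    print(f"z={z:+.1f}  sigmoid={sigmoid(z):.3f}  relu={relu(z):.3f}  tanh={tanh(z):.3f}")
```

Note that this simple `sigmoid` can overflow for very large negative inputs; numerical libraries usually use a more careful formulation.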

3. Layers:

ANNs typically consist of three types of layers: an input layer, one or more hidden layers, and an output layer. The input layer receives the input data, and each of its neurons represents a feature or attribute of the input. The hidden layers process the information by computing weighted sums of their inputs, applying activation functions, and passing the results to the next layer. Finally, the output layer produces the network’s final output, such as a class label, a probability, or a numeric value.

4. Forward Propagation:

During forward propagation, the input data flows through the network layer by layer. Each neuron’s output becomes the input for the neurons in the next layer. This process continues until the output layer produces the final result.
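
Forward propagation can be sketched as a loop over layers, where each layer's outputs become the next layer's inputs. The example below assumes a small network with 2 inputs, 3 hidden neurons, and 1 output neuron, all using the sigmoid activation. The layer sizes, weights, and biases are arbitrary illustrative values, not trained ones.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(inputs, weights, biases):
    """Compute the outputs of one layer.

    weights[j] holds the incoming weights of neuron j in this layer,
    and biases[j] holds its bias.
    """
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, neuron_weights)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    """Pass the inputs through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical 2-3-1 network: each layer is a (weights, biases) pair.
hidden_layer = ([[0.1, 0.4], [-0.3, 0.2], [0.5, -0.6]], [0.0, 0.1, -0.1])
output_layer = ([[0.7, -0.2, 0.3]], [0.05])

prediction = forward([1.0, 2.0], [hidden_layer, output_layer])
print(prediction)  # a list with one value between 0 and 1
```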

5. Training and Backpropagation:

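Training an ANN involves adjusting the connection weights and biases to minimize the difference between the network’s predicted output and the desired output, as measured by a loss function. Backpropagation computes how much each weight contributed to the error, and an optimization algorithm such as gradient descent uses that information to update the weights. The steps are as follows: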

- Forward Propagation: Input data is fed into the network, and the output is computed.

- Calculation of Error: The difference between the predicted output and the actual output is calculated.

- Backward Propagation: The error is propagated backward through the network. The weights of the connections are updated in proportion to their contribution to the error, using optimization algorithms such as gradient descent.

- Iterative Learning: The three steps above are repeated iteratively on different examples from the training dataset, allowing the network to adjust the weights and learn from the patterns in the data.
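
The steps above can be sketched as a small training loop. This is a toy illustration under several assumptions that are not part of the article: a network with 2 inputs, 2 sigmoid hidden neurons, and 1 sigmoid output, a squared-error loss, plain per-example gradient descent, and a four-row dataset for the logical AND function. The learning rate, number of epochs, and random seed are arbitrary choices.

```python
import math
import random

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, w_hidden, b_hidden, w_out, b_out):
    """Return the hidden activations and the network output for one example."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(w_hidden, b_hidden)]
    y_hat = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + b_out)
    return hidden, y_hat

def mean_squared_error(data, params):
    return sum((forward(x, *params)[1] - y) ** 2 for x, y in data) / len(data)

def train(data, epochs=2000, learning_rate=0.5, seed=0):
    rng = random.Random(seed)
    n_inputs, n_hidden = len(data[0][0]), 2
    w_hidden = [[rng.uniform(-1, 1) for _ in range(n_inputs)] for _ in range(n_hidden)]
    b_hidden = [0.0] * n_hidden
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    b_out = 0.0
    initial_loss = mean_squared_error(data, (w_hidden, b_hidden, w_out, b_out))

    for _ in range(epochs):
        for x, y in data:
            # Step 1: forward propagation.
            hidden, y_hat = forward(x, w_hidden, b_hidden, w_out, b_out)
            # Step 2: error between predicted and actual output.
            error = y_hat - y
            # Step 3: propagate the error backward (chain rule through the sigmoids).
            delta_out = error * y_hat * (1 - y_hat)
            delta_hidden = [delta_out * w_out[j] * hidden[j] * (1 - hidden[j])
                            for j in range(n_hidden)]
            # Gradient descent: move each parameter against its gradient.
            for j in range(n_hidden):
                w_out[j] -= learning_rate * delta_out * hidden[j]
                b_hidden[j] -= learning_rate * delta_hidden[j]
                for i in range(n_inputs):
                    w_hidden[j][i] -= learning_rate * delta_hidden[j] * x[i]
            b_out -= learning_rate * delta_out

    params = (w_hidden, b_hidden, w_out, b_out)
    return params, initial_loss, mean_squared_error(data, params)

# Toy dataset (logical AND): inputs paired with the desired output.
AND_DATA = [([0.0, 0.0], 0.0), ([0.0, 1.0], 0.0), ([1.0, 0.0], 0.0), ([1.0, 1.0], 1.0)]

params, initial_loss, final_loss = train(AND_DATA)
print(f"loss before training: {initial_loss:.4f}, after training: {final_loss:.4f}")
```

In practice, frameworks compute these gradients automatically, but the structure of the loop (forward pass, error, backward pass, update) stays the same.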

6. Prediction:

Once trained, an ANN can be used to make predictions on previously unseen data. The input is passed through the network, and the output layer predicts the outcome based on the learned patterns and weights.

By adjusting the weights and biases of the connections between neurons, ANNs can learn complex patterns and relationships in data, enabling them to perform tasks such as classification, regression, and pattern recognition. The network’s structure, number of layers, number of neurons, and choice of activation functions are key design considerations that impact its learning capabilities and performance on specific tasks.

Advantages of Artificial Neural Networks

- Learning from Complex Data: ANNs can learn from large and complex datasets, extracting intricate patterns that may not be apparent to humans.

- Adaptability and Generalization: ANNs can adapt to new data and generalize their learnings to make predictions on unseen inputs.

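- Fault Tolerance: ANNs can exhibit a degree of fault tolerance. Because what the network has learned is distributed across many weights, its output often degrades gradually rather than failing outright if some neurons or connections are damaged or lost.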

- Parallel Processing: The computations of the neurons within a layer are largely independent of each other, so they can run in parallel, for example on GPUs, enabling efficient computation and faster training.

- Nonlinearity: ANNs can model and capture nonlinear relationships in data, making them suitable for solving complex problems.

Disadvantages of Artificial Neural Networks

- Black Box Nature: ANNs often lack interpretability, making it challenging to understand and explain the reasoning behind their predictions.

- Data Requirements: ANNs require a significant amount of labeled training data to learn effectively, which may be time-consuming and costly to acquire.

- Computational Complexity: Training ANNs can be computationally intensive, especially for large and deep networks, requiring substantial computing resources.

- Overfitting: ANNs are prone to overfitting, wherein they may memorize training data instead of learning generalizable patterns, leading to poor performance on unseen data.

- Hyperparameter Tuning: ANNs involve tuning various hyperparameters, such as the number of layers, neurons, and learning rates, which can be challenging and time-consuming.

Applications of Artificial Neural Networks

Artificial Neural Networks find applications in various fields, including:

- Pattern Recognition: ANNs excel at recognizing patterns in images, speech, handwriting, and other complex data, making them valuable in fields like computer vision, speech recognition, and natural language processing.

- Prediction and Forecasting: ANNs can be used to predict future trends, market behavior, stock prices, weather patterns, and other time-series data.

- Medical Diagnosis: ANNs have been applied to aid in medical diagnoses, analyzing symptoms, and predicting diseases based on patient data.

- Financial Analysis: ANNs are used in financial institutions for credit scoring, fraud detection, and stock market analysis.

- Robotics and Control Systems: ANNs play a crucial role in enabling robots and control systems to adapt, learn, and make decisions based on sensor data and environmental feedback.

Conclusion

Artificial Neural Networks have revolutionized the field of machine learning and have found widespread applications across various industries. Their ability to learn from complex data, adapt to new inputs, and recognize patterns makes them a powerful tool for solving a wide range of problems. However, they also have limitations, including interpretability issues and the need for substantial computational resources and labeled training data. Understanding the applications, advantages, disadvantages, and underlying principles of Artificial Neural Networks is crucial for leveraging their potential and making informed decisions when applying them to real-world problems.

Frequently Asked Questions (FAQs)

Q1. What is the difference between a neuron and a node in an Artificial Neural Network?

A neuron and a node refer to the same concept in an Artificial Neural Network. They represent an artificial computational unit that receives input, processes it, and produces an output.

Q2. How do Artificial Neural Networks learn from data?

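Artificial Neural Networks learn from data through training, typically using backpropagation together with an optimization algorithm such as gradient descent. During training, the network measures the error between its predicted output and the actual output, and backpropagation determines how much each connection weight contributed to that error. The weights are then adjusted to reduce the error. Repeating this process over many examples helps the network learn and improve its predictions.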

Q3. Can Artificial Neural Networks handle categorical data?

Yes, Artificial Neural Networks can handle categorical data. Categorical variables can be one-hot encoded or transformed into numerical representations before being used as input to the network. This allows the network to learn patterns and make predictions based on the categorical features.
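
One-hot encoding can be sketched without any libraries. The helper `one_hot_encode` below is a hypothetical function written for this example; it assigns each distinct category a position (in sorted order) and represents every value as a vector with a single 1 at that position.

```python
def one_hot_encode(values: list[str]) -> tuple[list[str], list[list[int]]]:
    """Return the sorted category names and one one-hot vector per value."""
    categories = sorted(set(values))
    index = {category: i for i, category in enumerate(categories)}
    encoded = []
    for value in values:
        row = [0] * len(categories)
        row[index[value]] = 1
        encoded.append(row)
    return categories, encoded

colors = ["red", "green", "blue", "green"]
categories, encoded = one_hot_encode(colors)
print(categories)  # ['blue', 'green', 'red']
print(encoded)     # [[0, 0, 1], [0, 1, 0], [1, 0, 0], [0, 1, 0]]
```

Each encoded row can then be fed to the input layer, with one input neuron per category.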

Q4. What is the role of the activation function in an Artificial Neural Network?

The activation function introduces nonlinearity to the output of a neuron. It determines how strongly the neuron is activated based on the weighted sum of its inputs. Without activation functions, a stack of layers would behave like a single linear transformation. Activation functions therefore enable ANNs to model complex relationships and capture nonlinear patterns in the data, enhancing their learning capabilities.
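
A small numerical sketch can make the role of nonlinearity concrete. The example below uses made-up one-dimensional "layers" with arbitrary coefficients. Two linear layers in a row give exactly the same results as one linear layer, while inserting a ReLU between them produces a function that is no longer a straight line.

```python
def relu(z: float) -> float:
    return max(0.0, z)

def two_linear_layers(x: float) -> float:
    """Two stacked layers with no activation function."""
    hidden = 2.0 * x + 1.0
    return -3.0 * hidden + 0.5

def collapsed_single_layer(x: float) -> float:
    """The same mapping as one layer: (-3.0 * 2.0) * x + (-3.0 * 1.0 + 0.5)."""
    return -6.0 * x - 2.5

def two_layers_with_relu(x: float) -> float:
    """The same two layers, with a ReLU applied to the hidden value."""
    hidden = relu(2.0 * x + 1.0)
    return -3.0 * hidden + 0.5

for x in (-2.0, 0.0, 2.0):
    print(x, two_linear_layers(x), collapsed_single_layer(x), two_layers_with_relu(x))

# A straight line f satisfies f(-2) + f(2) == 2 * f(0).
# The purely linear stack passes this check; the ReLU version does not.
print(two_linear_layers(-2.0) + two_linear_layers(2.0) == 2 * two_linear_layers(0.0))
print(two_layers_with_relu(-2.0) + two_layers_with_relu(2.0) == 2 * two_layers_with_relu(0.0))
```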

Q5. Do Artificial Neural Networks require labeled training data?

Yes, for supervised learning, which is the setting described in this article, Artificial Neural Networks require labeled training data. Labeled data consists of input samples along with their corresponding correct outputs. During training, the network compares its predicted output with the actual output to compute the error and adjust the weights accordingly. Labeled data helps the network learn and generalize patterns for making accurate predictions. Some kinds of neural networks, such as autoencoders, can instead be trained on unlabeled data.